by Nico Marzian | Mar 23, 2020 | AR, Artificial Intelligence, Augmented Reality, Digitalisation, Education, Extended Reality, Industrial Internet of Things, Industry 4.0, Learning, Logistics, Manufacturing, Mixed Reality, Mobility, Virtual Reality, VR, XR

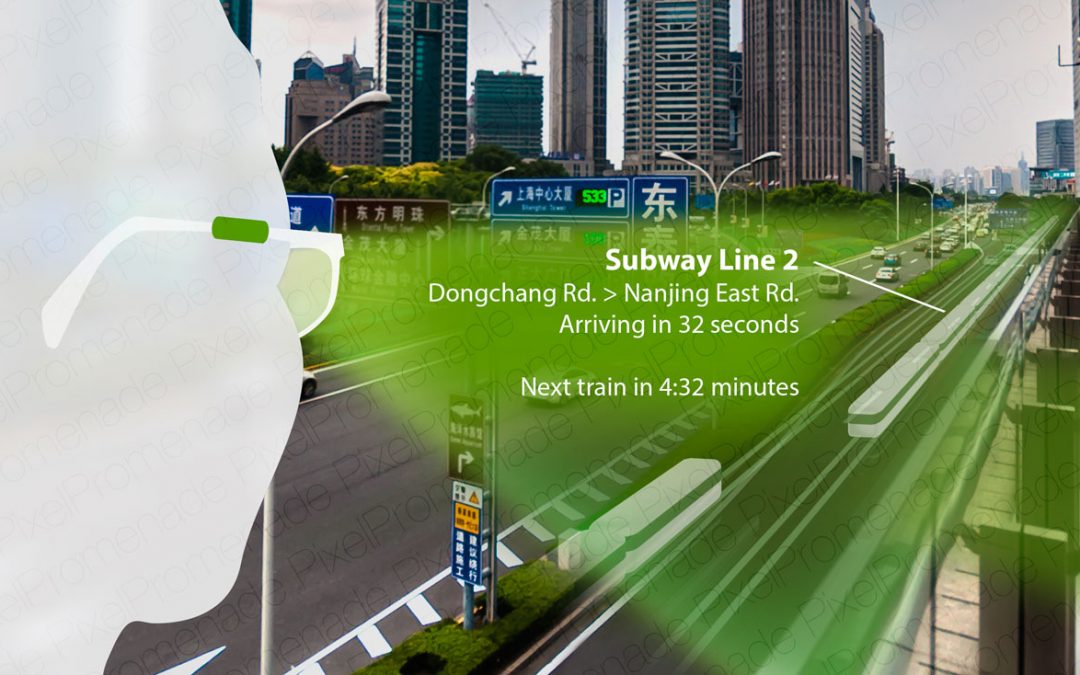

Making the Invisible Visible with AR

Augmented Reality, or AR for short, will change how we experience, measure, interpret and understand our world and also how we interact with it. AR will certainly challenge us – sometimes less, sometimes more significantly – but it...

by Nico Marzian | Apr 11, 2019 | Aerospace, Artificial Intelligence, Digitalisation, Industrial Internet of Things, Industry 4.0, Logistics, Mobility

Do Autonomous Vehicles Need to Decide in Ethically Difficult Situations?

Smart Urban Infrastructure for Secure Mobility & Logistics Vehicles to Decide on Whom to Spare

In expert interviews, conference talks, or articles like this one by MIT’s...